Training a deep convolutional neural network (CNN) from scratch is difficult because it requires a large amount of labeled training data and a great deal of expertise to ensure proper convergence. A promising alternative is to fine-tune a CNN that has been pre-trained using, for instance, a large set of labeled natural images. However, the substantial differences between natural and medical images may advise against such knowledge transfer.

In this paper, we seek to answer the following central question in the context of medical image analysis: Can the use of pre-trained deep CNNs with sufficient fine-tuning eliminate the need for training a deep CNN from scratch? To address this question, we considered four distinct medical imaging applications in three specialties (radiology, cardiology, and gastroenterology) involving classification, detection, and segmentation from three different imaging modalities, and investigated how the performance of deep CNNs trained from scratch compared with the pre-trained CNNs fine-tuned in a layer-wise manner.

Our experiments consistently demonstrated that 1) the use of a pre-trained CNN with adequate fine-tuning outperformed or, in the worst case, performed as well as a CNN trained from scratch; 2) fine-tuned CNNs were more robust to the size of training sets than CNNs trained from scratch; 3) neither shallow tuning nor deep tuning was the optimal choice for a particular application; and 4) our layer-wise fine-tuning scheme could offer a practical way to reach the best performance for the application at hand based on the amount of available data.

The pooling layer takes an input volume of size $w_1 \times h_1 \times c_1$ and uses two hyperparameters, the filter size $f$ and the stride $s$. The output volume has size $w_2 \times h_2 \times c_2$, where $w_2 = (w_1 - f)/s + 1$, $h_2 = (h_1 - f)/s + 1$, and $c_2 = c_1$.

Consider the computational graph of $f = qz$, where $q = x + y$. When we solve the equations moving from left to right (the "forward pass"), we get an output of $f = -12$. Then, just as in backpropagation, we derive the gradients moving from right to left at each stage.

The grads and params are calculated above, while we choose the learning_rate.
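The pooling output-size formula and the forward and backward passes through the graph $f = qz$ can be checked with a few lines of Python. This is only a sketch: the function names are hypothetical, the 224×224×64 input is an arbitrary example, and the inputs x = -2, y = 5, z = -4 are assumed values chosen because they reproduce the output f = -12 mentioned in the text.

```python
def pool_output_size(w1, h1, c1, f, s):
    """Output volume (w2, h2, c2) of a pooling layer with filter size f and stride s."""
    # This sketch insists that the filter tiles the input evenly.
    if (w1 - f) % s != 0 or (h1 - f) % s != 0:
        raise ValueError("filter and stride do not tile the input evenly")
    w2 = (w1 - f) // s + 1
    h2 = (h1 - f) // s + 1
    return w2, h2, c1  # pooling leaves the channel count unchanged


def forward_backward(x, y, z):
    """Forward and backward pass through f = q * z with q = x + y."""
    # Forward pass (left to right)
    q = x + y
    f = q * z
    # Backward pass (right to left), applying the chain rule at each node
    df_dq = z            # d(q*z)/dq
    df_dz = q            # d(q*z)/dz
    df_dx = df_dq * 1.0  # dq/dx = 1
    df_dy = df_dq * 1.0  # dq/dy = 1
    return f, {"x": df_dx, "y": df_dy, "z": df_dz}


print(pool_output_size(224, 224, 64, f=2, s=2))  # (112, 112, 64)
print(forward_backward(-2.0, 5.0, -4.0))  # (-12.0, {'x': -4.0, 'y': -4.0, 'z': 3.0})
```

The gradient with respect to x and y is simply the upstream gradient z, because the addition node passes gradients through unchanged.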

The updateParameters() function takes the gradients, the weight parameters, and a learning rate as input and returns the updated parameters.
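A minimal sketch of such an update step might look like the following. The text does not say which update rule updateParameters() uses, so plain gradient descent is assumed here; the snake_case name `update_parameters` and the convention that `grads[name]` stores the gradient for `params[name]` are also assumptions. The same code works for scalars and for NumPy arrays.

```python
def update_parameters(params, grads, learning_rate):
    """Return new parameters after one plain gradient-descent step.

    Assumes grads[name] holds the gradient of the loss with respect to
    params[name]; this key convention is hypothetical.
    """
    return {
        name: value - learning_rate * grads[name]
        for name, value in params.items()
    }


# Toy scalar parameters, purely for illustration
params = {"W1": 0.5, "b1": 0.0}
grads = {"W1": 0.2, "b1": -0.1}
updated = update_parameters(params, grads, learning_rate=0.1)
print(updated)  # W1 moves down to roughly 0.48, b1 moves up to roughly 0.01
```

Returning a new dictionary rather than modifying `params` in place keeps the previous parameters available, which can help when comparing losses before and after a step.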

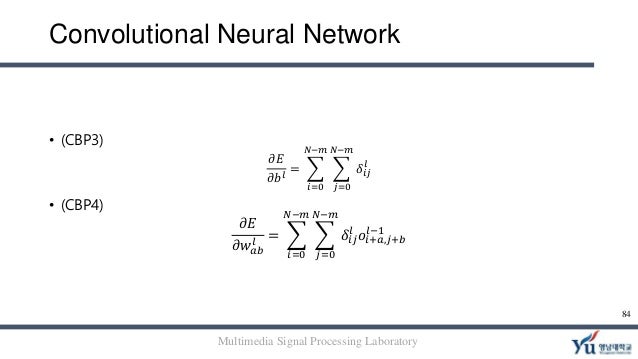

Convolutional neural networks (CNNs) are a biologically inspired variation of multilayer perceptrons (MLPs). Neurons in CNNs share weights, unlike in MLPs, where each neuron has a separate weight vector. This sharing of weights reduces the overall number of trainable weights, hence introducing sparsity.

Now comes the best part of this all: backpropagation! We'll write a function that calculates the gradient of the loss function with respect to the parameters. Generally, in a deep network, there can be many layers; more specifically, our neural net has only one hidden layer. To compute backpropagation, we write a function that takes as arguments an input matrix X, the train labels y, the output activations from the forward pass as cache, and a list of layer_sizes. During backpropagation (red boxes), we use the output cached during forward propagation (purple boxes).
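The backward pass for a network with one hidden layer could be sketched as below. Several details are assumptions not stated in the text: a tanh hidden layer, a sigmoid output with mean binary cross-entropy loss, examples stored as columns of X, the key names in `cache` and `params`, and an extra `params` argument (the hidden-layer gradient needs the output weights W2). The helper `forward_propagation` is included only so the example runs on its own.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def forward_propagation(X, params):
    """Forward pass; returns the activations to be cached for backprop."""
    A1 = np.tanh(params["W1"] @ X + params["b1"])
    A2 = sigmoid(params["W2"] @ A1 + params["b2"])
    return {"A1": A1, "A2": A2}


def backward_propagation(X, y, cache, params, layer_sizes):
    """Gradients of the mean cross-entropy loss for a one-hidden-layer net."""
    n_x, n_h, n_y = layer_sizes
    m = X.shape[1]  # number of training examples (columns of X)
    A1, A2 = cache["A1"], cache["A2"]
    assert A1.shape == (n_h, m) and A2.shape == (n_y, m)

    dZ2 = A2 - y  # sigmoid output combined with cross-entropy
    dW2 = dZ2 @ A1.T / m
    db2 = dZ2.sum(axis=1, keepdims=True) / m
    dZ1 = (params["W2"].T @ dZ2) * (1.0 - A1 ** 2)  # tanh'(z) = 1 - tanh(z)^2
    dW1 = dZ1 @ X.T / m
    db1 = dZ1.sum(axis=1, keepdims=True) / m
    return {"W1": dW1, "b1": db1, "W2": dW2, "b2": db2}


# Tiny random problem: 3 inputs, 4 hidden units, 1 output, 5 examples
rng = np.random.default_rng(0)
layer_sizes = [3, 4, 1]
X = rng.normal(size=(3, 5))
y = rng.integers(0, 2, size=(1, 5)).astype(float)
params = {
    "W1": rng.normal(size=(4, 3)) * 0.1,
    "b1": np.zeros((4, 1)),
    "W2": rng.normal(size=(1, 4)) * 0.1,
    "b2": np.zeros((1, 1)),
}
cache = forward_propagation(X, params)
grads = backward_propagation(X, y, cache, params, layer_sizes)
print({name: g.shape for name, g in grads.items()})
```

Each gradient has the same shape as the parameter it belongs to, so the result can be passed directly to a gradient-descent update.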
